Enterprises need an organized database to achieve business goals and ensure that the IT infrastructure is aligned with their organizational objectives. An organized database also helps the database administrator maintain and manage it with fewer issues. Database management demands time and resources. A well-tuned database is easier to navigate and frees up IT resources, thereby reducing costs for the enterprise.

It is easier to extract, analyze, and report information if the database is well organized. A well-tuned database also helps ensure that the extracted data is accurate. It is easier to recover data from performance-tuned databases after a disaster or a security breach.

Let us quickly understand what database performance tuning means.

Tuning a database refers to a group of activities that Database Administrators (DBAs) carry out to ensure databases operate smoothly and efficiently. It covers end-to-end optimization of a database system, from software to hardware. Tuning typically involves accelerating query response, improving indexing, deploying clusters, and reconfiguring components such as Oracle Servlet Engines (OSEs) according to how they are used and the demands of the end-user experience. MySQL and Oracle are two prominent examples of Database Management Systems (DBMS) that are commonly tuned.

Understanding Oracle Query Performance

Query performance optimization lays the foundation for high database efficiency. Collecting statistics on base tables enables Oracle Text to choose the most efficient query execution plan. The Oracle Text tracing facility helps identify bottlenecks in indexing and querying. Partitioning data and building local partitioned indices also boost query performance. Other ways to tune queries in Oracle include preferring inner joins over outer joins where the required results allow it, indexing the columns used in predicates, and rewriting subqueries with Global Temporary Tables (GTT).

What is Database Performance Tuning?

Performance tuning of Oracle databases includes optimizing SQL statements and query execution plans so that requests are handled more efficiently. How well the database is organized determines how efficiently SQL statements are executed and how many resources they consume when an application communicates with the database. Poorly optimized SQL statements force the database to work harder to retrieve information, consuming more resources than required. This in turn increases the chances of degrading the user experience in connected applications.

Response Time and Throughput

Response time is the amount of time taken by a database to complete a request, while system throughput is the number of processes completed in a given period of time.
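The two measures can be illustrated with a short Python sketch. The `Request` record, the sample timings, and the 10-second window are all hypothetical values chosen for illustration; they are not taken from a real Oracle workload.

```python
from dataclasses import dataclass


@dataclass
class Request:
    start: float  # seconds since the start of the measurement window
    end: float    # seconds since the start of the measurement window


def average_response_time(requests: list[Request]) -> float:
    """Mean time (seconds) the database took to complete one request."""
    return sum(r.end - r.start for r in requests) / len(requests)


def throughput(requests: list[Request], window_seconds: float) -> float:
    """Requests completed per second within the measurement window."""
    completed = [r for r in requests if r.end <= window_seconds]
    return len(completed) / window_seconds


# Hypothetical sample: four requests observed over a 10-second window.
sample = [Request(0.0, 0.5), Request(1.0, 1.5), Request(2.0, 4.0), Request(5.0, 6.0)]
print(average_response_time(sample))  # 1.0
print(throughput(sample, 10.0))       # 0.4
```

Note that the two numbers move independently: the one slow request raises the average response time without changing the throughput for the window.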

If the response time is high, the application delivers a slow user experience. Low system throughput, on the other hand, means the system can complete only a small number of tasks in a given time, often because resources are limited. An administrator must be able to optimize an Oracle database in line with the business goals and the type of applications in use.

While tuning the database for fast response times speeds up individual user queries, it may compromise other tasks in the workload. Aiming for high throughput, on the other hand, optimizes the performance of the entire workload to support more transactions per second, but it might not speed up individual queries.

What makes the difference is the type of applications in use. If a business uses an Online Transaction Processing (OLTP) application, throughput is used to measure performance, because the business handles a high volume of transactions through the application. If it uses a Decision Support System (DSS) with users running queries continuously, performance is measured by response time.

Proactive Monitoring & Eliminating Bottlenecks

Proactive monitoring checks the database continuously to discover and address performance issues. It differs from taking measures to mitigate the damage after an incident, because it catches inefficiencies before they turn into bigger problems. Proactive tuning carries some risk, as changes made by an administrator can themselves affect database performance. This risk can be mitigated if administrators evaluate each change carefully before making it.

Bottleneck elimination is a reactive process in which the administrator identifies a bottleneck and fixes it according to its root cause. Recoding SQL statements is the first way to handle internal bottlenecks. After that, the administrator can proceed to address external issues such as CPU and storage performance.

Transaction volumes grow with the business, and this adds load to the database. If the database is not performing effectively, it cannot handle unexpected peaks in business. An increase in demand then translates into a database that slows down or buckles under heavy loads. Partner with Gemini Consulting & Services to tune the database and align it with business goals. Contact us to know how database tuning works and stay prepared to handle changing business objectives.

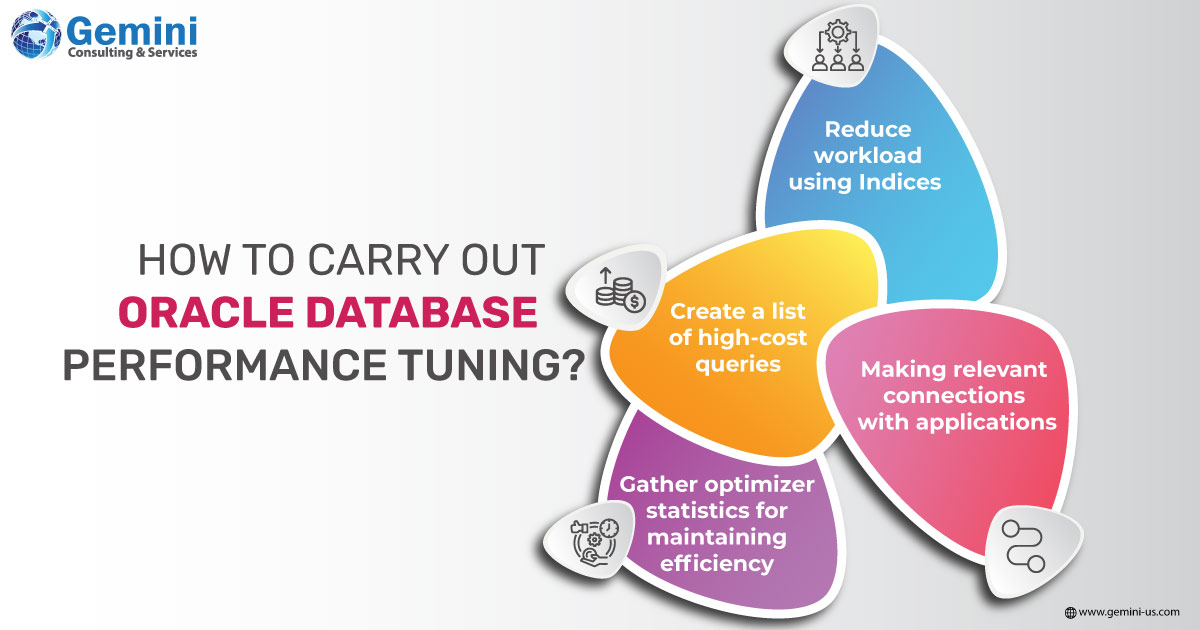

- Make a list of high-cost queries

Instead of optimizing every line of code, it is prudent to focus on the most frequently used SQL statements and those with the largest database I/O footprint. This can be done by using Oracle database monitoring tools such as Oracle SQL Analyze, which helps identify resource-intensive SQL statements.
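As a rough illustration of the ranking step, the Python sketch below sorts statements by their combined I/O. The field names are modeled on the `EXECUTIONS`, `DISK_READS`, and `BUFFER_GETS` columns of Oracle's `V$SQL` view, but the `StatementStats` record, the statement identifiers, and all figures are hypothetical; in practice the numbers would come from a monitoring tool or a query against the database.

```python
from dataclasses import dataclass


@dataclass
class StatementStats:
    sql_id: str
    executions: int
    disk_reads: int    # physical reads
    buffer_gets: int   # logical reads


def top_io_statements(stats: list[StatementStats], limit: int = 3) -> list[StatementStats]:
    """Return the statements with the largest combined physical and logical I/O."""
    return sorted(stats, key=lambda s: s.disk_reads + s.buffer_gets, reverse=True)[:limit]


# Hypothetical figures, not taken from a real system.
workload = [
    StatementStats("q_orders", executions=12000, disk_reads=900, buffer_gets=450000),
    StatementStats("q_report", executions=15, disk_reads=80000, buffer_gets=2000000),
    StatementStats("q_lookup", executions=300000, disk_reads=50, buffer_gets=600000),
    StatementStats("q_audit", executions=40, disk_reads=10, buffer_gets=1200),
]

for s in top_io_statements(workload, limit=2):
    print(s.sql_id, s.disk_reads + s.buffer_gets)
```

With these figures the shortlist is `q_report` followed by `q_lookup`. Summing physical and logical reads is only one possible cost measure; ranking by I/O per execution or by elapsed time may suit other workloads better.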

- Reduce workload using indices

Writing the same query in different ways and then keeping the most efficient version helps minimize the workload. If all that is needed is a small slice of the data, there is no point in processing thousands of irrelevant rows. To sustain a large workload, indexes can be used to access small sets of rows instead of scanning an entire table. Indexes are most useful on columns that are queried regularly.
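The effect of an index on the access path can be seen in a small Python sketch. It uses SQLite from the standard library as a stand-in so that it runs without an Oracle instance; Oracle's execution plans look different, but the principle of replacing a full scan with an index lookup is the same. The `orders` table, its columns, and the index name are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 1000, i * 1.5) for i in range(10000)],
)

QUERY = "SELECT id, total FROM orders WHERE customer_id = 42"


def plan(connection: sqlite3.Connection, sql: str) -> str:
    """Return the access-path description SQLite reports for a query."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return "; ".join(row[3] for row in rows)


plan_before = plan(conn, QUERY)  # no index yet: the whole table is scanned
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan(conn, QUERY)   # the lookup now goes through idx_orders_customer

print(plan_before)
print(plan_after)
```

The exact wording of the plan text varies between SQLite versions, so the sketch prints it rather than assuming a fixed string.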

- Making relevant connections with applications

Poor performance can also come from connections being dropped between the application and the database. The application should be checked to confirm that it is configured to connect to the database, access the table it needs, and release the connection cleanly when the task is done. Abruptly dropping and re-establishing connections affects performance. A better approach is to set up a stateful connection so that the application stays connected to the database. Maintaining this connection also ensures that system resources are not wasted on reconnecting each time the application interacts with the database.
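One common way to keep connections open between requests is connection pooling. The text above does not name a specific mechanism, so the Python sketch below is an assumption about how such a persistent connection might be realized, not a description of any particular driver. The `ConnectionPool` class is hypothetical, and SQLite stands in for the real database so the example runs on its own.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Keeps a fixed set of open connections and hands them out for reuse."""

    def __init__(self, connect, size: int):
        self.opened = 0
        self._idle = queue.Queue()
        for _ in range(size):
            self._idle.put(connect())
            self.opened += 1

    @contextmanager
    def connection(self):
        conn = self._idle.get()   # blocks until a connection is free
        try:
            yield conn
        finally:
            self._idle.put(conn)  # return it to the pool instead of closing it


# SQLite stands in for the real database driver in this sketch.
pool = ConnectionPool(lambda: sqlite3.connect(":memory:"), size=2)

results = []
for _ in range(100):              # 100 requests share the same 2 connections
    with pool.connection() as conn:
        results.append(conn.execute("SELECT 1").fetchone()[0])

print(pool.opened, len(results))  # 2 100
```

The point of the design is that the cost of opening a connection is paid twice, at startup, rather than once per request.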

- Gather optimizer statistics for maintaining efficiency

Optimizer statistics describe a database and its objects and are used by the database to choose the best execution plan for SQL statements. Collecting optimizer statistics helps the database maintain accurate information about table contents. Inaccurate statistics lead to a poor execution plan, which in turn affects the end-user experience. Oracle databases collect optimizer statistics automatically. Statistics can also be gathered manually using the DBMS_STATS package.
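A manual gathering call might be wrapped as in the Python sketch below. It assumes a DB-API style cursor, such as one from an Oracle driver, that accepts named bind variables; the `gather_table_stats` helper, the `RecordingCursor` stand-in, and the `SALES.ORDERS` schema and table are hypothetical. Only the `ownname` and `tabname` parameters of `DBMS_STATS.GATHER_TABLE_STATS` are used, leaving every other option at its default.

```python
GATHER_TABLE_STATS = """
BEGIN
    DBMS_STATS.GATHER_TABLE_STATS(ownname => :owner_name, tabname => :table_name);
END;
"""


def gather_table_stats(cursor, owner_name: str, table_name: str) -> None:
    """Ask Oracle to refresh optimizer statistics for one table."""
    cursor.execute(
        GATHER_TABLE_STATS,
        {"owner_name": owner_name, "table_name": table_name},
    )


class RecordingCursor:
    """Stand-in for a driver cursor so the sketch runs without a database."""

    def __init__(self):
        self.calls = []

    def execute(self, statement, parameters=None):
        self.calls.append((statement.strip(), parameters))


cursor = RecordingCursor()
gather_table_stats(cursor, "SALES", "ORDERS")  # hypothetical schema and table
print(cursor.calls[0][1])
```

Against a real database, the stand-in would be replaced by a cursor obtained from an open connection, and the call would typically be scheduled after large data loads rather than run on every request.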